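Linear regression is equivalent to kernel regression with a linear kernel: no high-dimensional transformation is needed, so computational efficiency is preserved.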

Original title: Linear Regression, the Kernel Trick, and the Linear Kernel

Original author: 数据派THU


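Questions for reflection:

2. Besides the RBF kernel mentioned in the article, what other kernel functions are commonly used, and what are their respective strengths and weaknesses?

3. The article shows that the linear kernel is "useless" in simple linear regression. Does using a linear kernel still make sense in more complex regression models, such as polynomial regression or ridge regression?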

Original content

Source: DeepHub IMBA. This article is about 2,700 words; suggested reading time: 5 minutes.

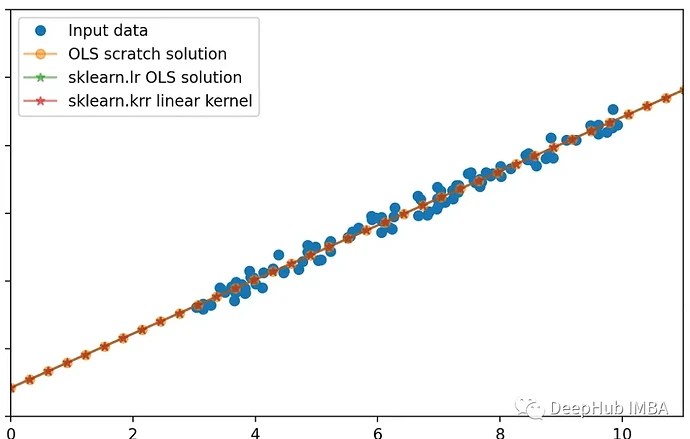

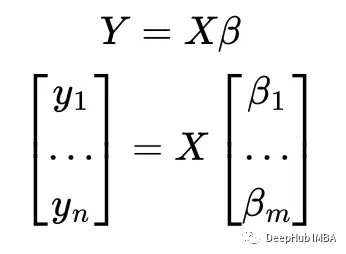

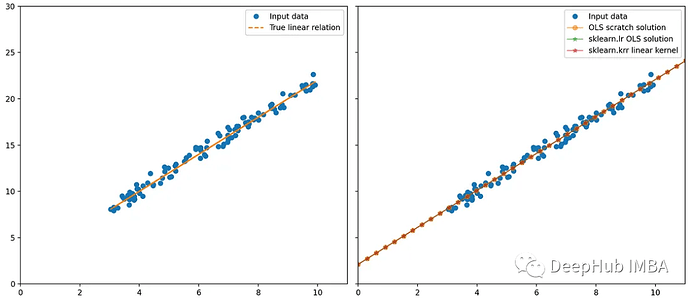

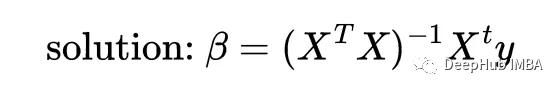

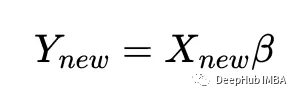

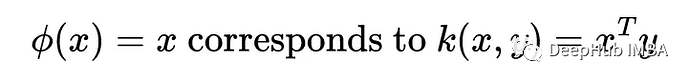

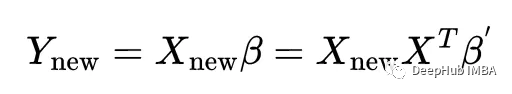

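In this article, I want to show an interesting result: linear regression is equivalent to ridge regression with a linear kernel and no regularization.

Linear Regression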

%matplotlib qt

import numpy as np

import matplotlib.pyplot as plt

from sklearn.linear_model import LinearRegression

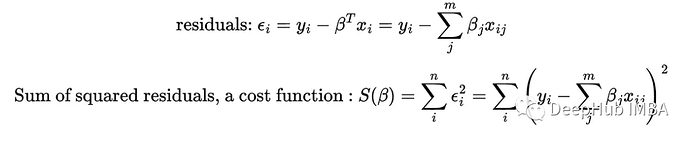

np.random.seed(0)

n = 100

X_ = np.random.uniform(3, 10, n).reshape(-1, 1)

beta_0 = 2

beta_1 = 2

true_y = beta_1 * X_ + beta_0

noise = np.random.randn(n, 1) * 0.5 # change the scale to reduce/increase noise

y = true_y + noise

fig, axes = plt.subplots(1, 2, squeeze=False, sharex=True, sharey=True, figsize=(18, 8))

axes[0, 0].plot(X_, y, "o", label="Input data")

axes[0, 0].plot(X_, true_y, '--', label='True linear relation')

axes[0, 0].set_xlim(0, 11)

axes[0, 0].set_ylim(0, 30)

axes[0, 0].legend()

# f_0 is a column of 1s

# f_1 is the column of x1

X = np.c_[np.ones((n, 1)), X_]

beta_OLS_scratch = np.linalg.inv(X.T @ X) @ X.T @ y

lr = LinearRegression(
    fit_intercept=False,  # do not fit the intercept separately, since we added the column of 1s for this purpose
).fit(X, y)

new_X = np.linspace(0, 15, 50).reshape(-1, 1)

new_X = np.c_[np.ones((50, 1)), new_X]

new_y_OLS_scratch = new_X @ beta_OLS_scratch

new_y_lr = lr.predict(new_X)

axes[0, 1].plot(X_, y, 'o', label='Input data')

axes[0, 1].plot(new_X[:, 1], new_y_OLS_scratch, '-o', alpha=0.5, label=r"OLS scratch solution")

axes[0, 1].plot(new_X[:, 1], new_y_lr, '-*', alpha=0.5, label=r"sklearn.lr OLS solution")

axes[0, 1].legend()

fig.tight_layout()

print(beta_OLS_scratch)

print(lr.coef_)

[[2.12458946]

[1.99549536]]

[[2.12458946 1.99549536]]
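The closed-form solution above inverts `X.T @ X` explicitly. As a cross-check, the following minimal sketch compares that normal-equation solution with NumPy's least-squares solver, which avoids forming the inverse. It assumes the same synthetic model as the article (slope 2, intercept 2, noise scale 0.5), but draws the data from a local `default_rng` generator, so the fitted numbers will differ slightly from the printed output above.

```python
import numpy as np

# Assumption: a local generator instead of the article's np.random.seed(0),
# so the exact coefficients differ from those printed in the article.
rng = np.random.default_rng(0)
n = 100
x = rng.uniform(3, 10, size=(n, 1))
y = 2 * x + 2 + 0.5 * rng.standard_normal((n, 1))

# Design matrix with a leading column of ones for the intercept.
X = np.c_[np.ones((n, 1)), x]

# Normal equations, as in the article.
beta_normal = np.linalg.inv(X.T @ X) @ X.T @ y

# Least-squares solver: same minimizer, without an explicit inverse.
beta_lstsq, *_ = np.linalg.lstsq(X, y, rcond=None)

print(beta_normal.ravel())
print(beta_lstsq.ravel())
print(np.allclose(beta_normal, beta_lstsq))
```

On a well-conditioned problem like this one, the two estimates should agree to floating-point precision; the solver-based route mainly matters when the columns of `X` are close to collinear.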

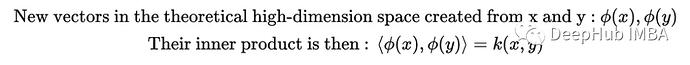

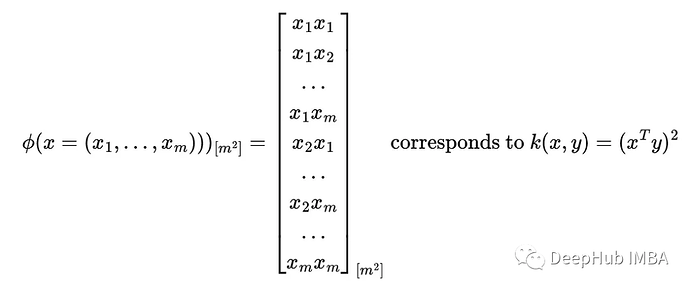

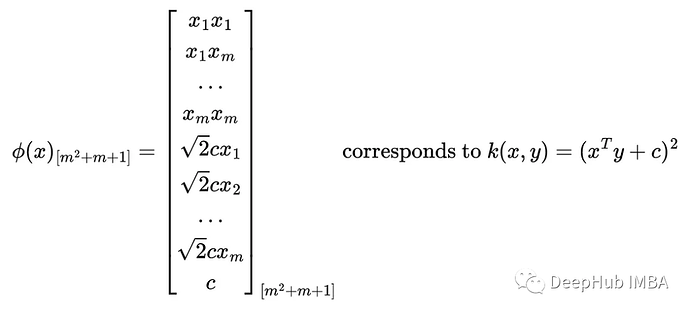

The Kernel Trick

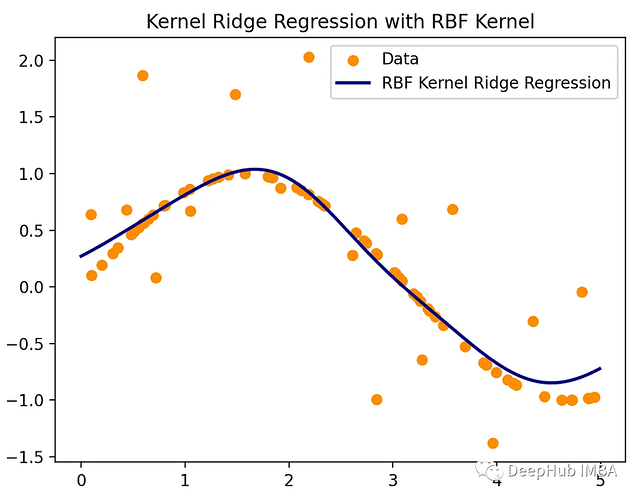
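To make the idea of the kernel trick concrete, here is a small sketch showing that a kernel evaluated in the original space can equal an inner product in a higher-dimensional feature space. The degree-2 polynomial kernel and the helper names `phi` and `poly2_kernel` are my own choices for illustration and are not taken from the article.

```python
import numpy as np


def phi(v):
    """Explicit degree-2 feature map for a 2-D input (hypothetical helper)."""
    x1, x2 = v
    return np.array([x1 ** 2, np.sqrt(2) * x1 * x2, x2 ** 2])


def poly2_kernel(u, v):
    """Homogeneous polynomial kernel of degree 2: k(u, v) = (u . v)^2."""
    return float(np.dot(u, v)) ** 2


u = np.array([1.0, 2.0])
v = np.array([3.0, -1.0])

# Inner product computed in the 3-D feature space.
explicit = float(np.dot(phi(u), phi(v)))

# Same quantity computed in the original 2-D space, with no feature map.
implicit = poly2_kernel(u, v)

print(explicit, implicit)
```

The two printed values agree up to floating-point rounding, even though `poly2_kernel` never builds the three-dimensional features. For kernels such as RBF, the corresponding feature space is infinite-dimensional, so only the kernel form is computable.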

import numpy as np

from sklearn.kernel_ridge import KernelRidge

import matplotlib.pyplot as plt

np.random.seed(0)

X = np.sort(5 * np.random.rand(80, 1), axis=0)

y = np.sin(X).ravel()

y[::5] += 3 * (0.5 - np.random.rand(16))

# Create a test dataset

X_test = np.arange(0, 5, 0.01)[:, np.newaxis]

# Fit the KernelRidge model with an RBF kernel

kr = KernelRidge(
    kernel='rbf',  # use RBF kernel
    alpha=1,  # regularization
    gamma=1,  # scale for rbf
)

kr.fit(X, y)

y_rbf = kr.predict(X_test)

# Plot the results

fig, ax = plt.subplots()

ax.scatter(X, y, color='darkorange', label='Data')

ax.plot(X_test, y_rbf, color='navy', lw=2, label='RBF Kernel Ridge Regression')

ax.set_title('Kernel Ridge Regression with RBF Kernel')

ax.legend()

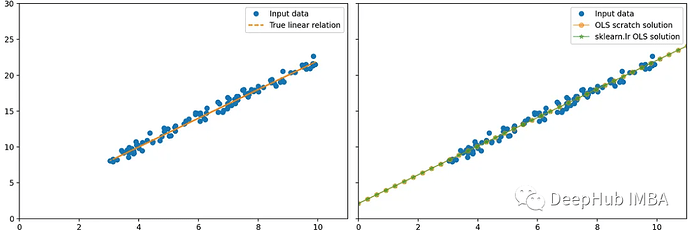

The Linear Kernel in Linear Regression
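A short derivation may help make the claimed equivalence concrete. The notation here is my own assumption, since the article's formulas are only available as images: $X \in \mathbb{R}^{n \times p}$ is the design matrix with full column rank, $y$ is the target vector, $K = X X^\top$ is the Gram matrix of the linear kernel, and $A^{+}$ denotes the Moore-Penrose pseudoinverse (needed because $K$ is singular whenever $n > p$).

```latex
\begin{aligned}
\hat{\beta}_{\mathrm{OLS}} &= (X^\top X)^{-1} X^\top y, \\
\hat{\alpha} &= K^{+} y = (X X^\top)^{+} y, \\
\hat{y}_{\mathrm{kernel}}(x) &= x^\top X^\top \hat{\alpha}
  = x^\top X^\top (X X^\top)^{+} y
  = x^\top X^{+} y
  = x^\top (X^\top X)^{-1} X^\top y
  = x^\top \hat{\beta}_{\mathrm{OLS}}.
\end{aligned}
```

The two steps that do the work are the identity $X^\top (X X^\top)^{+} = X^{+}$ and the fact that $X^{+} = (X^\top X)^{-1} X^\top$ when $X$ has full column rank. Under these assumptions, the unregularized linear-kernel predictor and the OLS predictor coincide at every input $x$.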

Linear Kernelization and Linear Regression
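The equivalence between OLS and unregularized linear-kernel regression can be checked numerically. The sketch below is not the article's own code: it assumes the same synthetic model (slope 2, intercept 2, noise scale 0.5) with a local random generator, and it uses a pseudoinverse with an explicit cutoff (`rcond=1e-10`, my choice) because the linear Gram matrix has rank 2 and cannot be inverted directly.

```python
import numpy as np

# Assumption: same synthetic model as the article, drawn from a local generator.
rng = np.random.default_rng(0)
n = 100
x = rng.uniform(3, 10, size=(n, 1))
y = 2 * x + 2 + 0.5 * rng.standard_normal((n, 1))
X = np.c_[np.ones((n, 1)), x]  # column of 1s plus the feature column

# Primal solution: ordinary least squares via the normal equations.
beta_ols = np.linalg.inv(X.T @ X) @ X.T @ y

# Dual solution: linear kernel Gram matrix K = X X^T (n x n, rank 2).
K = X @ X.T
alpha = np.linalg.pinv(K, rcond=1e-10) @ y  # dual coefficients, no regularization

# Predict on new points with both formulations.
new_X = np.c_[np.ones((50, 1)), np.linspace(0, 15, 50).reshape(-1, 1)]
y_ols = new_X @ beta_ols
y_kernel = (new_X @ X.T) @ alpha  # kernel between new points and training points

print(np.allclose(y_ols, y_kernel))
print(np.allclose(X.T @ alpha, beta_ols))
```

Both checks should pass: the kernel predictions match the OLS predictions, and mapping the dual coefficients back through `X.T` recovers the OLS coefficients. The dual route works with an n x n matrix instead of a 2 x 2 one, which is why the linear kernel brings extra cost and no extra expressiveness here.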

Summary

Editor: 文婧