本文介绍了机器学习中四种超参数优化方法:手动调参、网格搜索、随机搜索和贝叶斯搜索。

原文标题:机器学习 4 个超参数搜索方法、代码

原文作者:数据派THU

冷月清谈:

怜星夜思:

2、如何选择合适的超参数调优方法呢?

3、传统手动调参难度大,是否有具体技巧可以分享?

原文内容

来源:机器学习杂货店本文约1800字,建议阅读10分钟

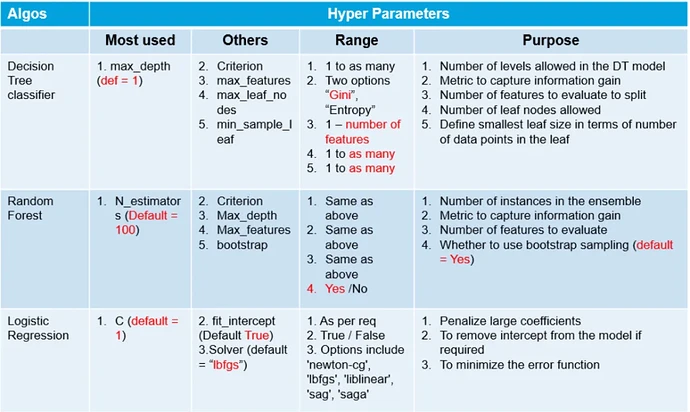

ML工作流中最困难的部分之一是为模型找到最好的超参数。ML模型的性能与超参数直接相关。

介绍

维基百科上说,“Hyperparameter optimization或tuning是为学习算法选择一组最优的hyperparameters的问题”。

超参数

内容

-

传统的手工调参

-

网格搜索

-

随机搜索

-

贝叶斯搜索

1. 传统手工搜索

#importing required libraries from sklearn.neighbors import KNeighborsClassifier from sklearn.model_selection import train_test_split from sklearn.model_selection import KFold , cross_val_score from sklearn.datasets import load_winewine = load_wine()

X = wine.data

y = wine.target#splitting the data into train and test set

X_train,X_test,y_train,y_test = train_test_split(X,y,test_size = 0.3,random_state = 14)#declaring parameters grid

k_value = list(range(2,11))

algorithm = [‘auto’,‘ball_tree’,‘kd_tree’,‘brute’]

scores =

best_comb =

kfold = KFold(n_splits=5)#hyperparameter tunning

for algo in algorithm:

for k in k_value:

knn = KNeighborsClassifier(n_neighbors=k,algorithm=algo)

results = cross_val_score(knn,X_train,y_train,cv = kfold)print(f’Score:{round(results.mean(),4)} with algo = {algo} , K = {k}')

scores.append(results.mean())

best_comb.append((k,algo))

best_param = best_comb[scores.index(max(scores))]

print(f’\nThe Best Score : {max(scores)}')

print(f"[‘algorithm’: {best_param[1]} ,‘n_neighbors’: {best_param[0]}]")

缺点:

-

没办法确保得到最佳的参数组合。

-

这是一个不断试错的过程,所以,非常的耗时。

2.网格搜索

from sklearn.model_selection import GridSearchCVknn = KNeighborsClassifier()

grid_param = { ‘n_neighbors’ : list(range(2,11)) ,

‘algorithm’ : [‘auto’,‘ball_tree’,‘kd_tree’,‘brute’] }grid = GridSearchCV(knn,grid_param,cv = 5)

grid.fit(X_train,y_train)#best parameter combination

grid.best_params_#Score achieved with best parameter combination

grid.best_score_#all combinations of hyperparameters

grid.cv_results_[‘params’]

#average scores of cross-validation

grid.cv_results_[‘mean_test_score’]

缺点:

3. 随机搜索

from sklearn.model_selection import RandomizedSearchCVknn = KNeighborsClassifier()

grid_param = { ‘n_neighbors’ : list(range(2,11)) ,

‘algorithm’ : [‘auto’,‘ball_tree’,‘kd_tree’,‘brute’] }rand_ser = RandomizedSearchCV(knn,grid_param,n_iter=10)

rand_ser.fit(X_train,y_train)#best parameter combination

rand_ser.best_params_#score achieved with best parameter combination

rand_ser.best_score_#all combinations of hyperparameters

rand_ser.cv_results_[‘params’]

#average scores of cross-validation

rand_ser.cv_results_[‘mean_test_score’]

4. 贝叶斯搜索

-

使用先前评估的点X1*:n*,计算损失f的后验期望。

-

在新的点X的抽样损失f,从而最大化f的期望的某些方法。该方法指定f域的哪些区域最适于抽样。

安装: pip install scikit-optimize

from skopt import BayesSearchCVimport warnings

warnings.filterwarnings(“ignore”)parameter ranges are specified by one of below

from skopt.space import Real, Categorical, Integer

knn = KNeighborsClassifier()

#defining hyper-parameter grid

grid_param = { ‘n_neighbors’ : list(range(2,11)) ,

‘algorithm’ : [‘auto’,‘ball_tree’,‘kd_tree’,‘brute’] }#initializing Bayesian Search

Bayes = BayesSearchCV(knn , grid_param , n_iter=30 , random_state=14)

Bayes.fit(X_train,y_train)#best parameter combination

Bayes.best_params_#score achieved with best parameter combination

Bayes.best_score_#all combinations of hyperparameters

Bayes.cv_results_[‘params’]

#average scores of cross-validation

Bayes.cv_results_[‘mean_test_score’]

另一个实现贝叶斯搜索的类似库是bayesian-optimization。

安装: pip install bayesian-optimization

总结

编辑:黄继彦